Latest micro-posts

You can also view the full archives of micro-posts. Longer blog posts are available in the Articles section.

Nushell isn’t exactly a shell, at least not in the traditional Unix sense of the word. Nushell is trying to answer the question: “what if we asked more of our shells?” — The case for Nushell

With recent support for data frames, Nushell becomes an interesting challenger for tiny on-the-fly data wrangling scripts. Beware that it relies on polars-arrow, which (most of the time) requires a recent stable Rust toolchain. Also, if you encounter an E0034 error (“multiple applicable items in scope”) when compiling polars-related dependencies, try adding --locked to cargo install. When you’re done, enjoy the power of data frames right in your shell!

Following up on my last timings for processing a 30 MB CSV file, loading the file in Nushell is a breeze:

~/tmp> timeit {dfr open ./nycflights.csv}

70ms 682µs 766ns

~/tmp> timeit {open ./nycflights.csv}

1sec 194ms 477µs 961ns

The first command uses dfr open, while the second relies on the builtin open command.

♪ Benoît Delbecq · Anamorphoses

Until now, when reading my feeds in Newsboat, I simply sorted them by unread count using a dedicated keybinding (bind-key S rev-sort, which lets me press Su to get the desired output). I have now learned about l, which shows only unread feeds. It is quite handy since it acts as a filter: the list of unread feeds empties itself as you read articles.

Understanding Automatic Differentiation in 30 lines of Python: A simplified yet effective illustration of AD in the context of neural network training. #python
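To give a feel for what such a short implementation involves, here is a minimal sketch of reverse-mode AD on scalars. It is not the code from the linked article: the `Var` class and its methods are hypothetical names chosen for illustration, and only addition, multiplication and tanh are supported.

```python
import math


class Var:
    """Scalar node in a computation graph (hypothetical name, for illustration)."""

    def __init__(self, value, parents=()):
        self.value = value
        self.grad = 0.0
        # Each entry is (parent_node, d(self)/d(parent)).
        self.parents = parents

    def __add__(self, other):
        other = other if isinstance(other, Var) else Var(other)
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        other = other if isinstance(other, Var) else Var(other)
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))

    def tanh(self):
        t = math.tanh(self.value)
        return Var(t, ((self, 1.0 - t * t),))

    def backward(self):
        # Collect nodes in post-order so that, once reversed, every node
        # is processed before any of its parents.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent, _ in node.parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            for parent, local in node.parents:
                # Chain rule: accumulate the contribution of this path.
                parent.grad += local * node.grad


# A single "neuron" without activation: y = w * x + b
w, x, b = Var(3.0), Var(2.0), Var(1.0)
y = w * x + b
y.backward()
print(w.grad, x.grad, b.grad)  # 2.0 3.0 1.0
```

The gradients accumulate with `+=` because a variable may reach the output through several paths, as in `x * x`.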

My thoughts on Haskell in 2020. tl;dr Stick to Simple Haskell. #haskell

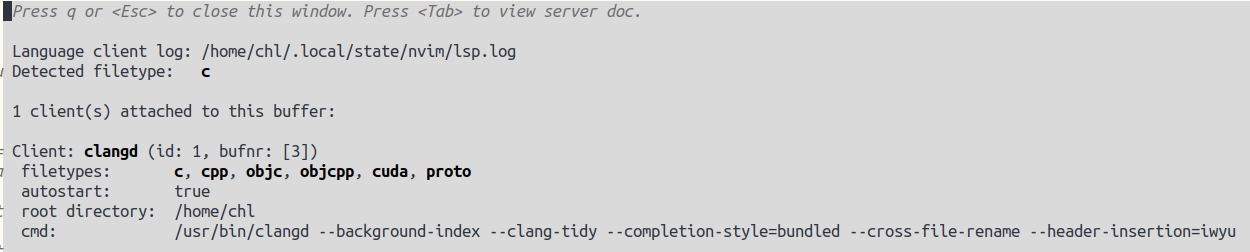

A Modern C Development Environment: A complete step-by-step tutorial to develop using Docker and GitHub workflow. Note that clang-tidy is available in ALE for Vim. If you use Neovim, the clangd LSP already uses clang-tidy, or you can just add --clang-tidy to clangd parameters. Extra options can also be added in a .clang-tidy file. See also clang --help-hidden.

As a sequel to one of my last benchmarking posts, here’s a rough estimate of Polars vs. Datatable performance when reading a 34 MB file (NYC Flights Dataset, available from various sources; see, e.g., this post):

import time

import datatable

import polars

tic = time.time()

flights = polars.read_csv("nycflights.csv", null_values="NA")

toc = time.time()

print(f"Elapsed time: {(toc-tic) * 10**3:.2f} ms")

## => Elapsed time: 54.61 ms

tic = time.time()

flights = datatable.fread("nycflights.csv")

toc = time.time()

print(f"Elapsed time: {(toc-tic) * 10**3:.2f} ms")

## => Elapsed time: 57.60 ms

Polars also offers a lazy CSV reader, scan_csv, which returns much faster (1.22 ms) since it defers reading the data until the query is collected. #python

Identity Beyond Usernames. For random UUIDs, see also Fixed Bits of Version 4 UUID.
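As a quick illustration of those fixed bits, the following sketch uses Python's standard uuid module to check that a version 4 UUID always carries the version nibble 4 and the two-bit variant 10, which leaves 122 random bits out of 128. The variable names are only illustrative.

```python
import uuid

u = uuid.uuid4()

# The version is stored in the top 4 bits of the third field
# (bits 76..79 of the 128-bit integer).
version_nibble = (u.int >> 76) & 0xF

# The variant is stored in the top 2 bits of the fourth field
# (bits 62..63 of the 128-bit integer).
variant_bits = (u.int >> 62) & 0b11

text = str(u)
print(text)
print(version_nibble, format(variant_bits, "02b"))

# In the canonical string form, the first digit of the third group is
# always "4" and the first digit of the fourth group is 8, 9, a or b.
print(text[14], text[19])
```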