Latest micro-posts

You can also view the full archives of micro-posts. Longer blog posts are available in the Articles section.

My Neovim configuration has been stable for over a year. I hardly ever touch it anymore, except to clean up a few unnecessary bindings or fix some deprecations following API changes. And guess what: Treesitter has been revamped, with plenty of incompatible changes in the main branch on GitHub. Treesitter-textobjects also switched from the master to the main branch, leaving incremental selection orphaned. I'll keep my current config for the time being because I'm too lazy today, but in light of this issue, I used brew pin to keep neovim at 0.11.6. #vim

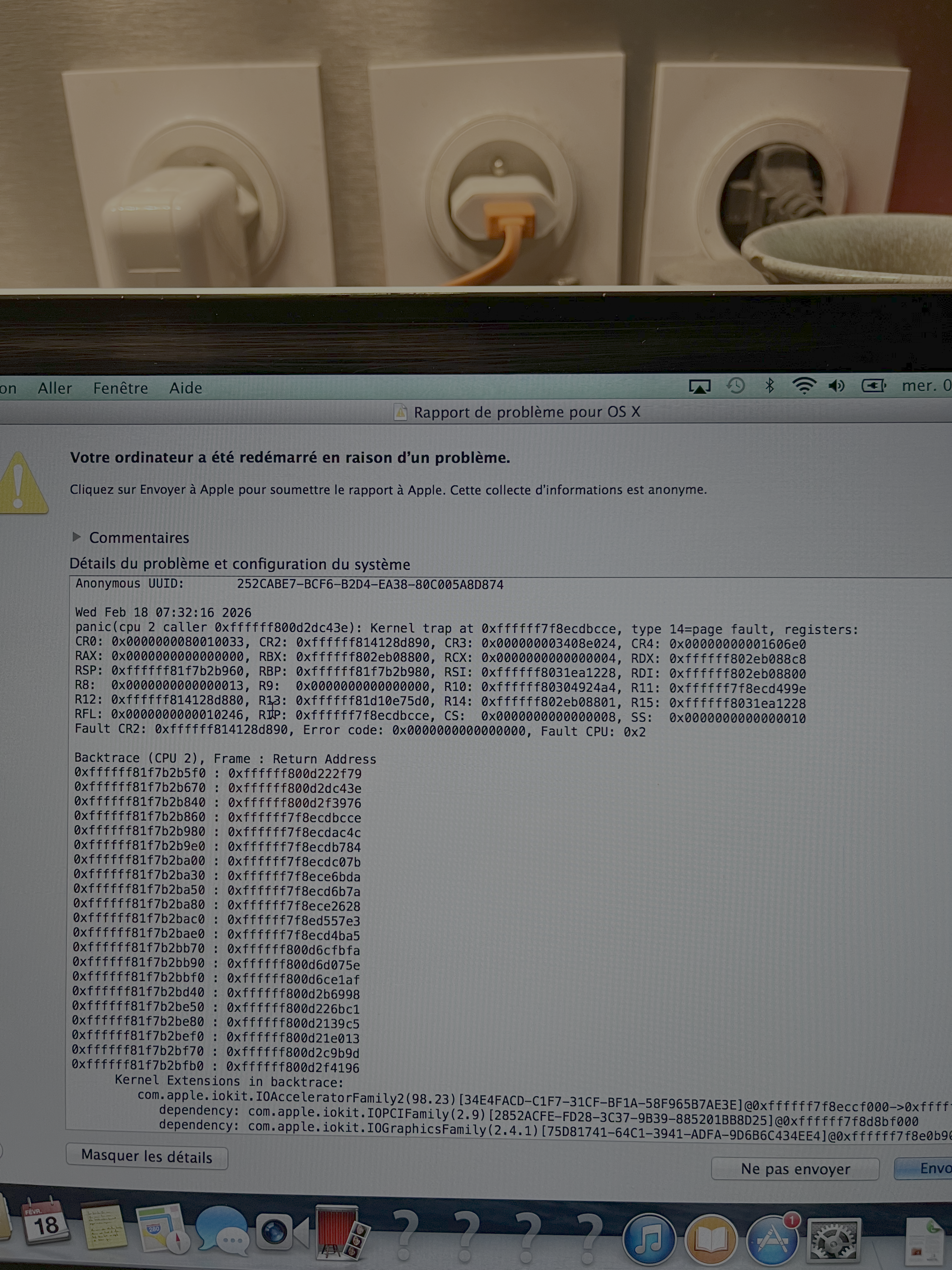

Using a PowerBook G4 Today: It was a pleasure to find such an old beast, gathering dust at the back of a cupboard, still up and running. Sadly, it runs Mac OS X 10.3 (not even the latest update). Maybe I'll install OpenBSD to give it a bit of a boost, but I'm afraid I would lose wireless connectivity and the current graphics capabilities. #apple

10-202: Introduction to Modern AI. I especially like the AI policy section: “(…) but we strongly encourage you to complete your final submitted version of your assignment without AI.”

My hope is that there remains a primordial spark, a glimpse of genius, to rediscover, to reconnect to - to serve not annual trends or constant phonification, but the needs of the user to use the computer as a tool to get something done. — Welcome (back) to Macintosh

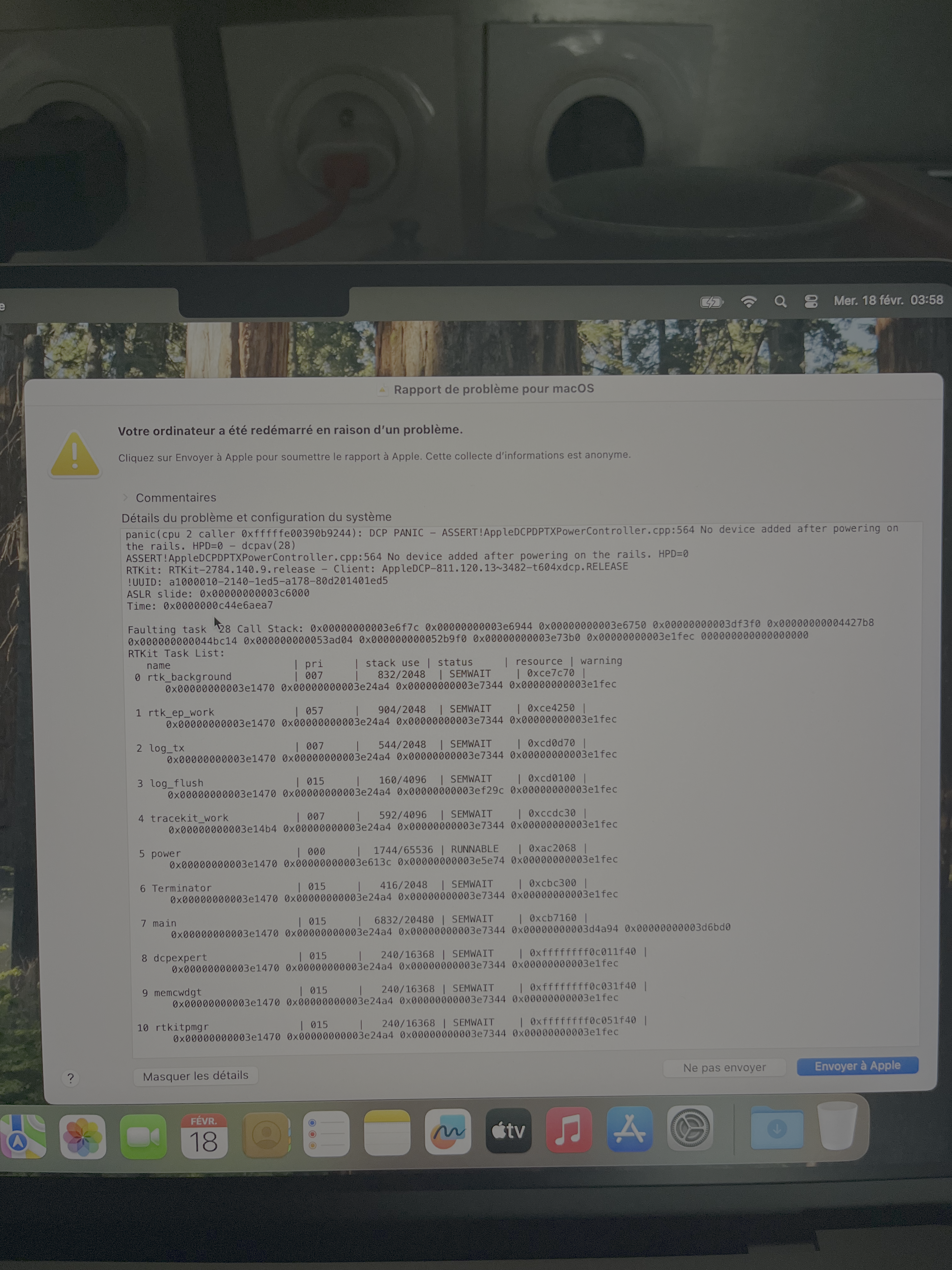

Back to my Mac. This happened to me two weeks ago.

And sadly, my old MBP, on which I had booted Ubuntu, had a (kernel) panic attack after I reinstalled OS X…

Git’s Magic Files: the sections “Other Conventions” and “Beyond Git” are worth keeping in mind.

Ivry again, Feb. 2026


An invitation to a sparse Cholesky factorisation. Left-looking Cholesky factorization in two lines:

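```python
import numpy as np
def cholesky(A):
    n = A.shape[0]
    L = np.zeros((n, n))
    for j in range(n):  # left-looking: column j only reads columns 0..j-1 of L
        L[j, j] = np.sqrt(A[j, j] - L[j, :j] @ L[j, :j])
        L[j+1:, j] = (A[j+1:, j] - L[j+1:, :j] @ L[j, :j]) / L[j, j]
    return L
```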
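Where the two lines come from, for the dense case only and with 1-based indices: equate entries of $A = LL^\top$ for $i \ge j$, using that $L$ is lower triangular, then isolate the single unknown in column $j$. Every sum runs over $k < j$, i.e. columns already computed to the left, which is what makes the scheme left-looking.

$$
A_{ij} = \sum_{k=1}^{j} L_{ik} L_{jk}
\;\Longrightarrow\;
L_{jj} = \sqrt{A_{jj} - \sum_{k=1}^{j-1} L_{jk}^2},
\qquad
L_{ij} = \frac{1}{L_{jj}}\Big(A_{ij} - \sum_{k=1}^{j-1} L_{ik} L_{jk}\Big), \quad i > j.
$$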

Who Needs Backpropagation? Computing Word Embeddings with Linear Algebra: numerical word representations are built using simple frequency counts, a little information theory, and linear algebra. #python
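The three ingredients can be sketched in NumPy. This is a minimal sketch of one common variant, assuming positive pointwise mutual information (PPMI) over a symmetric context window followed by a truncated SVD; the article's exact weighting, window size and dimensionality may differ, and both the toy corpus and the helper name ppmi_svd_embeddings are hypothetical.

```python
import numpy as np

# Hypothetical toy corpus, far too small for meaningful vectors.
corpus = [
    "the cat sat on the mat",
    "the dog sat on the rug",
    "a cat chased a dog",
]

def ppmi_svd_embeddings(sentences, window=2, dim=2):
    """Hypothetical helper: PPMI over co-occurrence counts, then truncated SVD."""
    # 1. Frequency counts: how often word w appears within `window` tokens of context c
    tokens = [s.split() for s in sentences]
    vocab = sorted({w for sent in tokens for w in sent})
    index = {w: i for i, w in enumerate(vocab)}
    counts = np.zeros((len(vocab), len(vocab)))
    for sent in tokens:
        for i, w in enumerate(sent):
            for j in range(max(0, i - window), min(len(sent), i + window + 1)):
                if j != i:
                    counts[index[w], index[sent[j]]] += 1

    # 2. Information theory: PPMI(w, c) = max(0, log p(w, c) / (p(w) p(c)))
    p_wc = counts / counts.sum()
    p_w = p_wc.sum(axis=1, keepdims=True)
    p_c = p_wc.sum(axis=0, keepdims=True)
    pmi = np.zeros_like(counts)
    seen = counts > 0  # the log is only taken where the pair actually co-occurs
    pmi[seen] = np.log(p_wc[seen] / (p_w * p_c)[seen])
    ppmi = np.maximum(pmi, 0.0)

    # 3. Linear algebra: keep the top `dim` singular directions as word vectors
    U, S, _ = np.linalg.svd(ppmi)
    return vocab, U[:, :dim] * S[:dim]

vocab, vectors = ppmi_svd_embeddings(corpus)
print(dict(zip(vocab, vectors.round(2).tolist())))
```

Scaling the left singular vectors by the singular values is one choice among several; some variants use the square root of the singular values or drop them entirely.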